Building Epidemic Sound’s first GenAI feature

Between 2023 and 2024, Epidemic Sound (in collaboration with Stability) started training models to generate music, using our proprietary music catalog as input.

By early 2025, we finally felt that the quality of the generated output was ready for commercial use.

As the product designer, I was tasked, together with two frontend engineers and one UX researcher, with exploring what the user-facing experience could look like.

The Alphas

We worked in one-week sprints, launching a new Alpha prototype at the end of every week. We tested each prototype with users and fed their feedback into the next one.

We kept it scrappy, lean and really focused on building to learn.

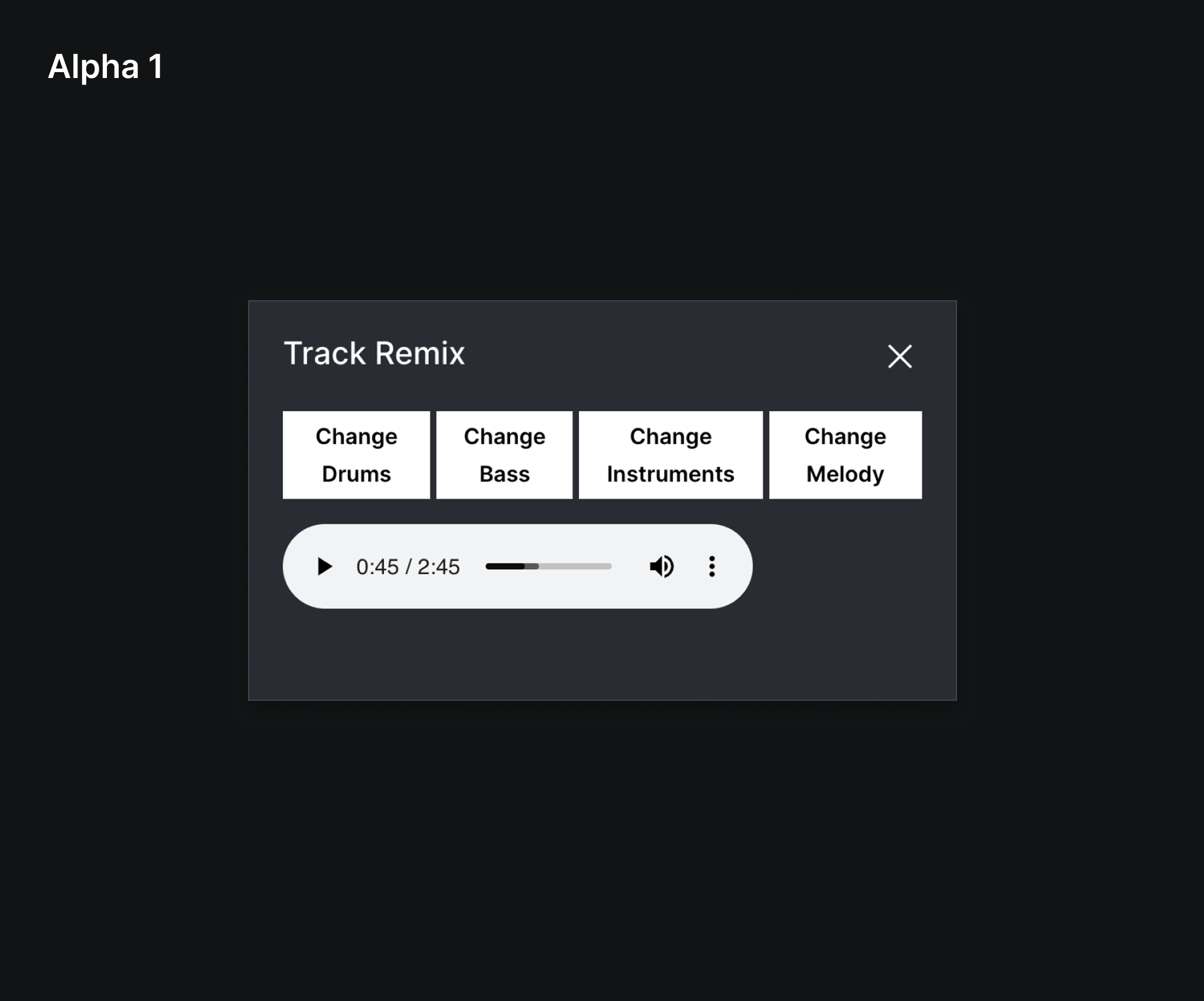

Alpha 1: First take, not much thought put into the UX yet. We just wanted to see it working.

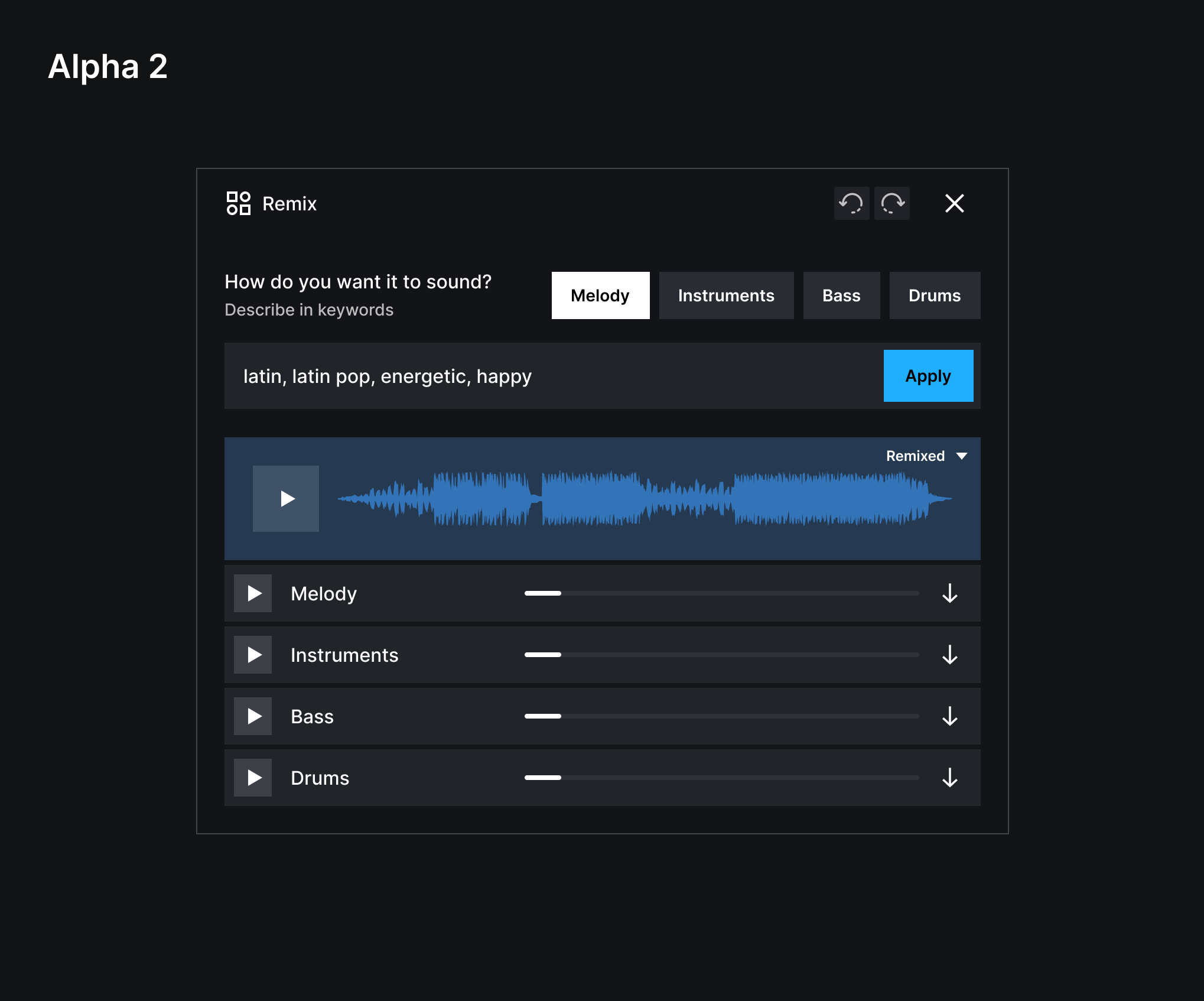

Alpha 2: Added an input field for users to describe the changes they wanted to make, and separated the output audio into stems (instruments). Learning: Users had a hard time describing what they wanted.

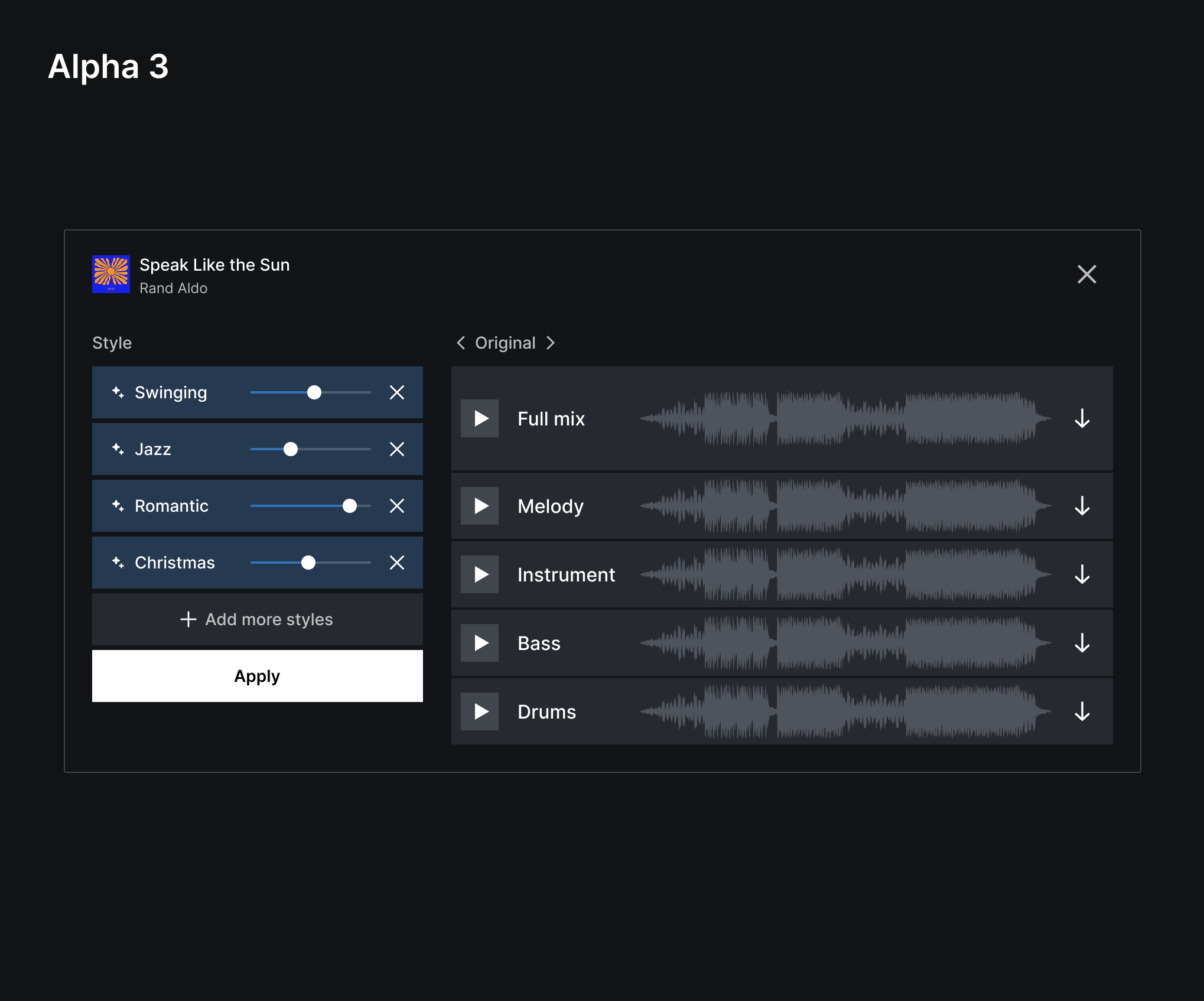

Alpha 3: Instead of free-text input, we tried using keywords + sliders to control input strength. Learning: Users preferred this way of providing input, but the results didn’t sound good when, for example, they added “Christmas” to a hip-hop song. We needed to set better guardrails.

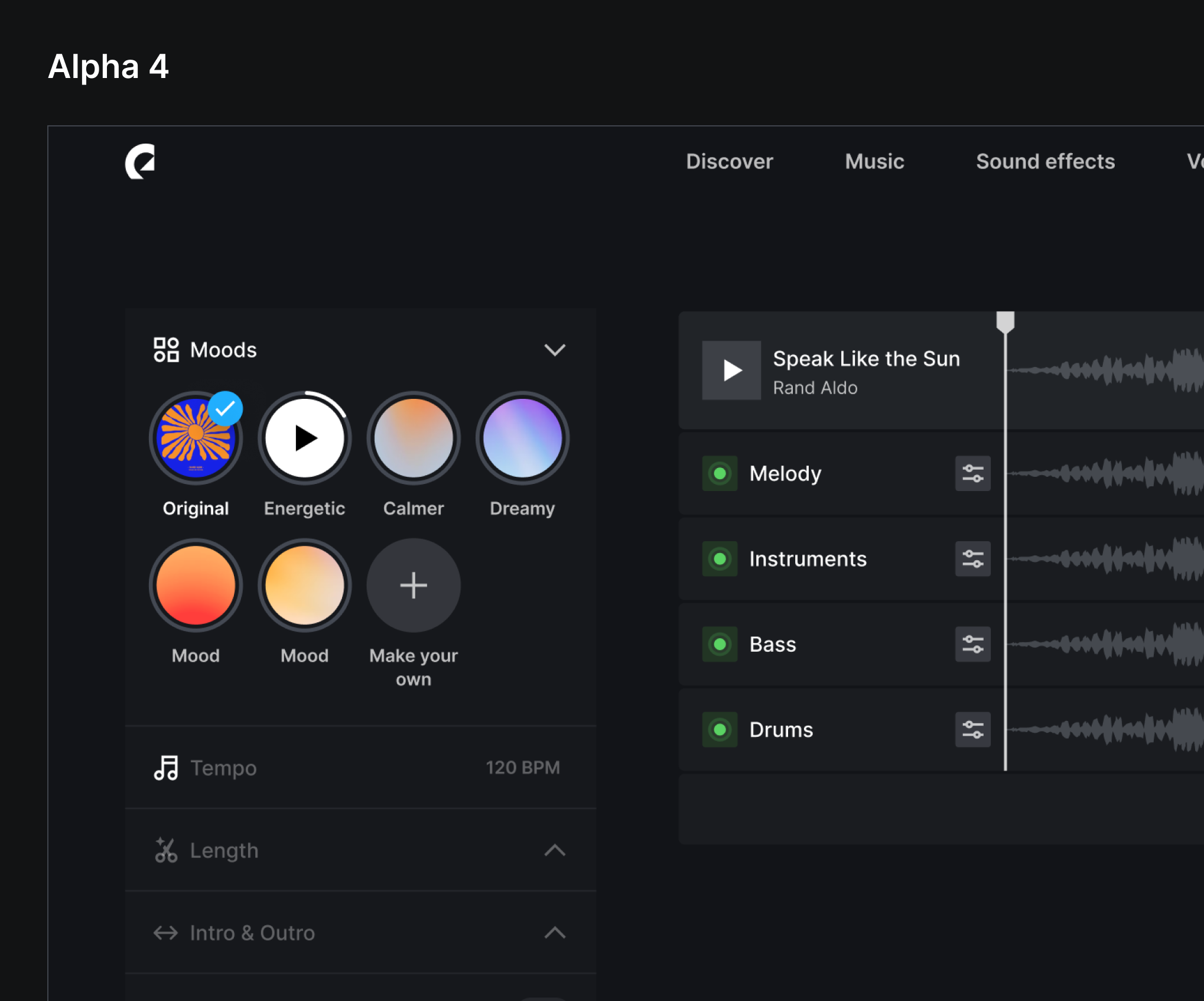

Alpha 4: Evolved the keywords + sliders into pre-defined mood presets, which produced better results. Learning: Users found this useful, but it was more of a “nice to have” than a feature that would solve a real pain point.

Learnings after 4 Alphas

We realized that the Alphas we built were heavily tech-driven, with a focus on pushing the generative AI agenda. They didn’t really map to users’ actual needs or current workflows. There was also a slightly negative sentiment toward AI replacing music created by real musicians.

We took a step back to rethink what the experience could look like if we took a user-first approach. Where in the users’ workflow is AI-generated content useful? How do we keep the essence of human-made music while using AI as a superpower to help alleviate pain points?

Snapshot of insights gathered from Alpha 1-4

Mapping the user journey and where GenAI could fit in

Hitting the nail on the head with Alpha 5

We took a completely new approach based on the feedback we got from users.

Instead of generating completely new melodies or drum tracks in a different style, what users really wanted was to be able to move parts of a track around. This is a big pain point today: they have to manually stitch parts of a track together.

We used generative AI to inpaint the seams so transitions would sound perfect. On top of that, we allowed users to easily turn off any instrument, add mood filters or change intensity at any point in the song.

Alpha 5 Figma prototype

Alpha 5 → Beta → General Availability

When we built Alpha 5, we saw a complete change in sentiment (for the better), both among the users we tested with and among AI skeptics within our company. This is a path that is true to Epidemic Sound as a company: we’re not in the business of generating music, but we can definitely use AI in an elegant way to enhance our product offering and solve real-world problems that our users face.

Alpha 5 became our Beta. We ran user tests on it to help us define what we should launch to the public. Once that was done, we handed the work over to another team to get the feature ready for general availability.